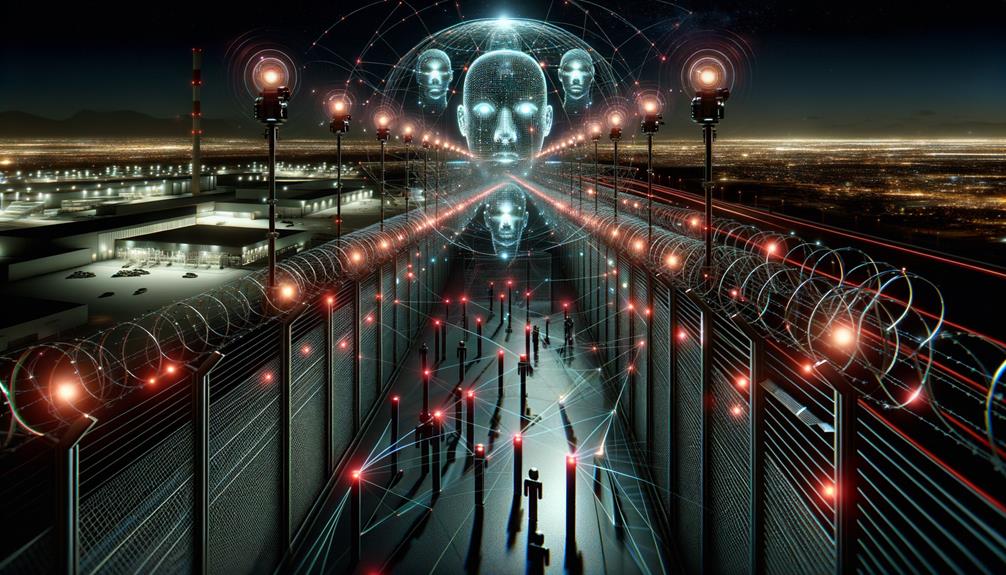

AI is revolutionizing federal agency surveillance, turning once-unthinkable capabilities into reality. Agencies like the NSA and DHS deploy AI for intelligence gathering and border security. However, this tech-driven efficiency isn't all sunshine. AI systems can be biased and discriminatory, and they create privacy nightmares of their own. Meanwhile, transparency lags behind. Regulations? They struggle to keep up. Data protection is a major challenge, amplified by AI's voracious appetite for information. Curious about the balance between security and intrusion?

Key Takeaways

- AI enhances federal surveillance capabilities with automated data processing and analysis, improving efficiency beyond what was possible a decade ago.

- The integration of AI in surveillance presents significant privacy risks due to rapid data processing and potential lack of human oversight.

- AI systems in federal surveillance face ethical concerns, including algorithmic bias and disproportionate impacts on marginalized communities.

- Transparency and accountability in AI-driven surveillance are needed to address public skepticism and protect civil liberties.

- Balancing the benefits of AI for security with robust privacy protection remains a critical challenge for federal agencies.

While federal agencies revel in their shiny new AI toys, the rest of us watch with a mix of awe and dread. AI tools have transformed surveillance capabilities, automating data processing and analysis with an efficiency that would have been unthinkable a decade ago. Federal agencies, from the NSA to the Department of Homeland Security, are knee-deep in AI, using it for everything from intelligence gathering to border security. The potential is enormous. But so are the risks.

AI ethics, anyone? The potential for abuse of AI in surveillance is not just a theoretical concern. It's reality. Public skepticism isn't just a passing phase; it's a demand for surveillance transparency. AI systems, powerful as they are, can identify patterns and anomalies in large data sets with startling precision. But this power comes at a cost. Bias in AI algorithms is a persistent issue, leading to discriminatory outcomes that disproportionately affect marginalized communities. Imagine being wrongly flagged in a surveillance net. Terrifying, isn't it?
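One common way to make "disproportionately affect" measurable is to compare false positive rates across groups, that is, how often people who pose no risk get flagged anyway. The sketch below is purely illustrative: the group names and counts are hypothetical, not drawn from any real system, and the `GroupOutcomes` type is an assumption made for this example.

```python
from dataclasses import dataclass


@dataclass
class GroupOutcomes:
    """Hypothetical audit counts for one demographic group."""
    flagged_innocent: int  # people wrongly flagged (false positives)
    total_innocent: int    # everyone in the group who posed no risk


def false_positive_rate(group: GroupOutcomes) -> float:
    """Share of innocent people in the group who were flagged anyway."""
    return group.flagged_innocent / group.total_innocent


# Hypothetical numbers, chosen only to illustrate the calculation.
groups = {
    "group_a": GroupOutcomes(flagged_innocent=10, total_innocent=1000),
    "group_b": GroupOutcomes(flagged_innocent=40, total_innocent=1000),
}

rates = {name: false_positive_rate(g) for name, g in groups.items()}

# Ratio of the two rates: how many times more often an innocent
# member of group_b is flagged than an innocent member of group_a.
disparity = rates["group_b"] / rates["group_a"]

for name, rate in rates.items():
    print(f"{name}: false positive rate = {rate:.1%}")
print(f"disparity ratio = {disparity:.1f}")
```

In this made-up case, both groups are the same size and equally innocent, yet one is wrongly flagged several times as often. That gap, not the overall accuracy figure, is what a bias audit would look for.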

Then there's the elephant in the room: privacy. AI amplifies privacy risks like never before, gobbling up data faster than you can say "Privacy Act of 1974." Legislation struggles to keep up, and the law sometimes feels like a relic next to cutting-edge AI systems. Sure, these systems enhance surveillance efficiency, but at what cost? The potential for biased outputs raises significant civil liberties concerns, and the lack of human oversight in AI-driven decisions is unnerving. No one wants to be a victim of an algorithmic error, yet it happens. By some estimates, the volume of data in the world doubles roughly every two years, so the pool of information available for AI systems to process keeps growing at an unprecedented rate, further complicating privacy concerns. Add AI threat detection software to the mix and agencies can identify risks and respond in real time, which makes surveillance more capable and the questions about privacy and oversight more urgent.
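To put the doubling claim in perspective, here is a quick back-of-the-envelope sketch. It takes the two-year doubling period from the paragraph above at face value; the function name and the time horizons are just illustrative choices.

```python
def growth_factor(years: float, doubling_period_years: float = 2.0) -> float:
    """How many times larger the data volume is after `years`,
    assuming it doubles every `doubling_period_years`."""
    return 2 ** (years / doubling_period_years)


# Illustrative horizons only.
for years in (2, 4, 10):
    print(f"after {years} years: {growth_factor(years):.0f}x the data")
```

If the doubling rate holds, a decade means 32 times as much data to collect, store, and analyze. Privacy rules written for today's volumes would be facing a very different landscape.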

Federal agencies face the Herculean task of balancing privacy with security. Regulations are essential, but without rigorous enforcement they're akin to a Band-Aid on a bullet wound. The ACLU isn't backing down, pushing for more transparency in the NSA's use of AI. They're right to do so. After all, isn't transparency the bedrock of accountability? The NSA has been integrating AI into its operations for several years, and that has drawn increased scrutiny over the potential for privacy invasions and wrongful investigations.

And let's not forget the technical side. AI systems rely on vast data sets to improve, and they comb through networks with relentless efficiency to sniff out cybersecurity threats. It's a double-edged sword. The integration of AI enhances data analysis capabilities, sure, but it also raises the stakes for data protection: the more data these systems ingest, the more there is to secure. Balancing AI's benefits with the need for robust data protection remains a challenge.

In this brave new world of AI-driven surveillance, the line between security and intrusion blurs. Federal agencies may have their shiny toys, but the rest of us are left grappling with the implications—wary and watchful.

References

- https://www.aclu.org/news/national-security/how-is-one-of-americas-biggest-spy-agencies-using-ai-were-suing-to-find-out

- https://www.brookings.edu/articles/protecting-privacy-in-an-ai-driven-world/

- https://epic.org/issues/ai/government-use-of-ai/

- https://www.lawfaremedia.org/article/using-ai-to-improve-the-government-without-violating-the-privacy-act

- https://www.dhs.gov/ai