China has escalated its surveillance game. Using an AI tool built on Meta's Llama model, a Chinese security operation monitors anti-China posts on Western social media. Platforms like X and Facebook, beware. Real-time reports are fed straight to authorities. Ethical nightmare? You bet. Personal privacy, trampled. It's a digital panopticon with no escape. Meanwhile, the West is embroiled in its own debate over AI ethics. But there's more to this tale of global surveillance.

Key Takeaways

- China's AI tool, based on Meta's Llama model, monitors anti-Chinese social media posts in Western countries.

- The tool provides real-time reports of protests and dissident activities to Chinese authorities.

- It scans platforms like X, Facebook, YouTube, Instagram, Telegram, and Reddit, raising privacy concerns.

- The AI's misuse poses ethical dilemmas, especially in tracking Uyghur rights protests.

- OpenAI banned the accounts involved in developing the tool, highlighting the need for international cooperation against AI misuse.

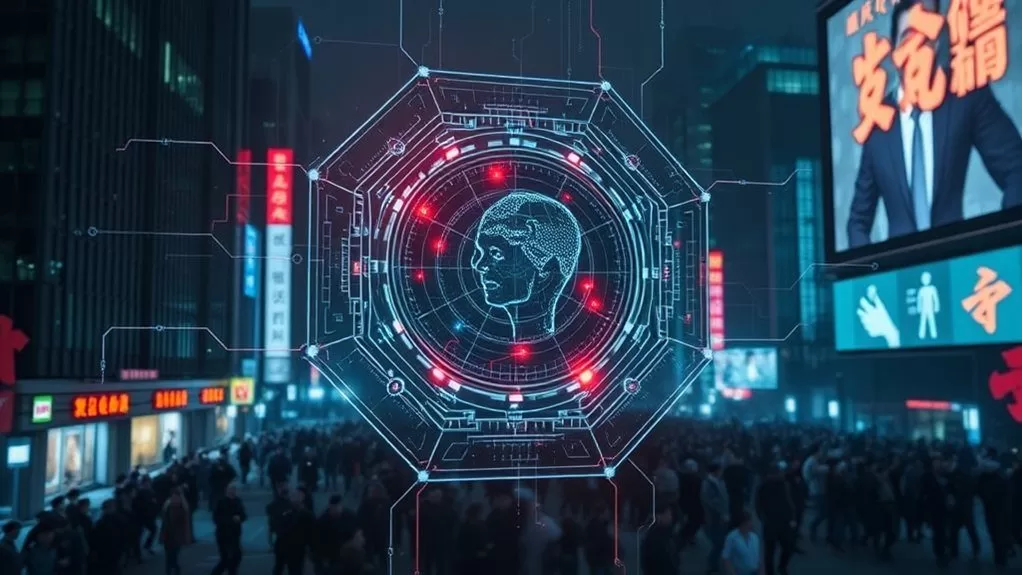

In a world obsessed with surveillance, China's latest AI tool, based on Meta's Llama model, takes spying to a whole new level. Developed by a Chinese security operation, the tool is designed to monitor anti-Chinese posts across social media platforms and generate real-time reports on them. It primarily targets Western countries, focusing on protests and dissident activities, and feeds its insights directly to Chinese authorities. That geographical focus and the meticulous monitoring have sparked considerable privacy concerns and raised ethical questions about the misuse of AI technology.

The tool's functionality is both impressive and alarming. It scans content from platforms like X (formerly Twitter), Facebook, YouTube, Instagram, Telegram, and Reddit. Its ability to analyze vast amounts of data in real time and distribute insights to embassies and intelligence agencies amounts to a digital panopticon. The ethical implications are glaring. While the technology showcases China's advances in integrating AI into surveillance, it also raises the question: should such power be wielded without oversight? The potential for misuse is vast, with the tool already being used to track Uyghur rights protests and other dissident activities. Companies must implement measures to safeguard privacy and uphold ethical standards in AI-driven surveillance technology, including transparency in data collection and storage.

The campaign, which OpenAI labeled "Peer Review," employs non-OpenAI models for the tool itself, but its developers used OpenAI tools for debugging and development. It sits alongside other operations such as "Sponsored Discontent," which targets Chinese dissidents globally, further illustrating the political impact and ethical dilemmas posed by AI misuse. OpenAI researchers emphasize the need for vigilance in how AI technology is applied, noting that AI can both enable and combat malicious activities. The global surveillance concerns are not mere paranoia; they're tangible, as AI becomes a double-edged sword, capable of both safeguarding and infringing. China's early start in AI development has positioned it to potentially challenge Western leadership, making the implications of this tool even more significant.


OpenAI, having recognized the policy violations, banned the accounts involved in developing this tool. Yet the cat's out of the bag. AI's potential for both good and evil is undeniable. While OpenAI's response highlights the need for international cooperation to counter such misuse, it also underscores the broader implications of AI in geopolitical contexts. China's use of AI for surveillance further sharpens the competition in AI research between East and West.

In the grand scheme, the AI surveillance tool highlights a stark reality: technology, while innovative, can easily cross ethical boundaries. The tool's capabilities exemplify the fine line between security and privacy invasion. It's a classic case of technological advancements outpacing ethical considerations, leaving us to ponder the true cost of progress. But hey, who needs privacy when you've got cutting-edge surveillance, right?

Final Thoughts

The Chinese AI surveillance tool is a marvel of modern technology, tracking anti-China posts across the major platforms in real time. Impressive, yet unsettling. On one hand, it showcases advanced capabilities; kudos to innovation. On the other, it's a Big Brother nightmare for free speech, leaving critics in a digital chokehold. Pros and cons collide. Precision meets oppression. A tool both brilliant and chilling. The power to monitor is here, wielded with surgical precision. Some call it security; others see a digital dystopia unfolding.
